As a 23-year veteran at Western Digital, David Hall describes his role as being an instigator. Though his title may be engineer fellow in HDD research and development, his best work comes from challenging norms to achieve innovation.

“My job is to kick things off, plow new fields, then move on to something else,” Hall said in an interview. “I like to be chief instigator, and challenge ways that we’ve always done things.”

One of those projects materialized today as Western Digital announced a flash-enhanced drive architecture called OptiNAND™, which includes an iNAND® universal flash storage (UFS) embedded flash drive (EFD). It is not a hybrid technology, but a smarter, faster, denser storage drive architecture that could reach an estimated 50TB of capacity this decade.

It’s an accomplishment that technology leaders at Western Digital, including Hall, say is unique to a company that can manufacture and vertically integrate both HDD and flash technology.

Ventures to implement NAND

Back in 2015, one of Hall’s projects was to investigate how to get more performance and storage space out of hard drives by using NAND flash, but not as a hybrid drive. A previous project Hall was part of combined flash and HDD in a hybrid storage approach, but it proved not to be a good fit for the market at the time. This time, he was interested in what else could be done with NAND to increase capacity and performance.

“A lot of times when you challenge the status quo, it turns out the status quo is what’s best, but I had a feeling this time I was on to something,” Hall said. Even in cases where nothing material comes from it, Hall added, there are often very good reasons for at least exploring what might be possible.

And the acquisition of SanDisk, which closed in May 2016, would provide a critical opportunity for vertical integration. By that time, Hall had already begun his research into NAND use cases, pricing trends, and potentials for increasing areal density and performance.

His initial executive pitch for potential uses of NAND and performance improvements received positive responses from peers and company leaders. But turning research into production would require efforts far beyond just his own, including bringing two large engineering groups from different technology houses together to work toward one goal. Strong collaboration between the NAND and HDD engineering groups would be crucially important.

For Mark Murin, a senior director in flash systems design engineering, that process specifically was a point of pride during the project. The process required serious rethinking around legacy design and legacy norms, he said.

“Two totally different engineering groups, coming up with the same goal, while every group makes changes to legacy design and legacy understanding — it’s something extremely valuable,” Murin said. “We had a common goal to create something very new, very successful. Every side was open to requests and to learning about new technologies.”

The end goal, ultimately, was to create a product that would help Western Digital customers keep pace with surging demand for data storage.

A growing need

Last year the world created or replicated some 64 zettabytes of data, according to IDC. And the amount of digital data created over the next five years will be more than double what has been created since the introduction of digital storage. Nearly every industry worldwide will therefore need efficient ways to store far more data, created at an unprecedented pace.

Though it’s more complex than it sounds, in HDDs, the straightforward way to add more storage is to add more storage disks and more write heads. But doing so is not necessarily the most cost-effective way.

Bill Boyle, an engineer fellow of HDD research and development at Western Digital, said that from the beginning of the OptiNAND project, the architecture had two main focuses: features that increase capacity and features that increase performance.

“If we can increase the number of terabytes per drive, [customers] receive a benefit of cost per terabyte,” he said. “The other categories relate to the performance, how fast we can execute commands.”

Reimagining the use of DRAM

One of those performance enhancements is not exactly how fast something is done, but how often. Specifically, with adjacent track interference (ATI) refreshing, using NAND will cut down the frequency of refreshes needed.

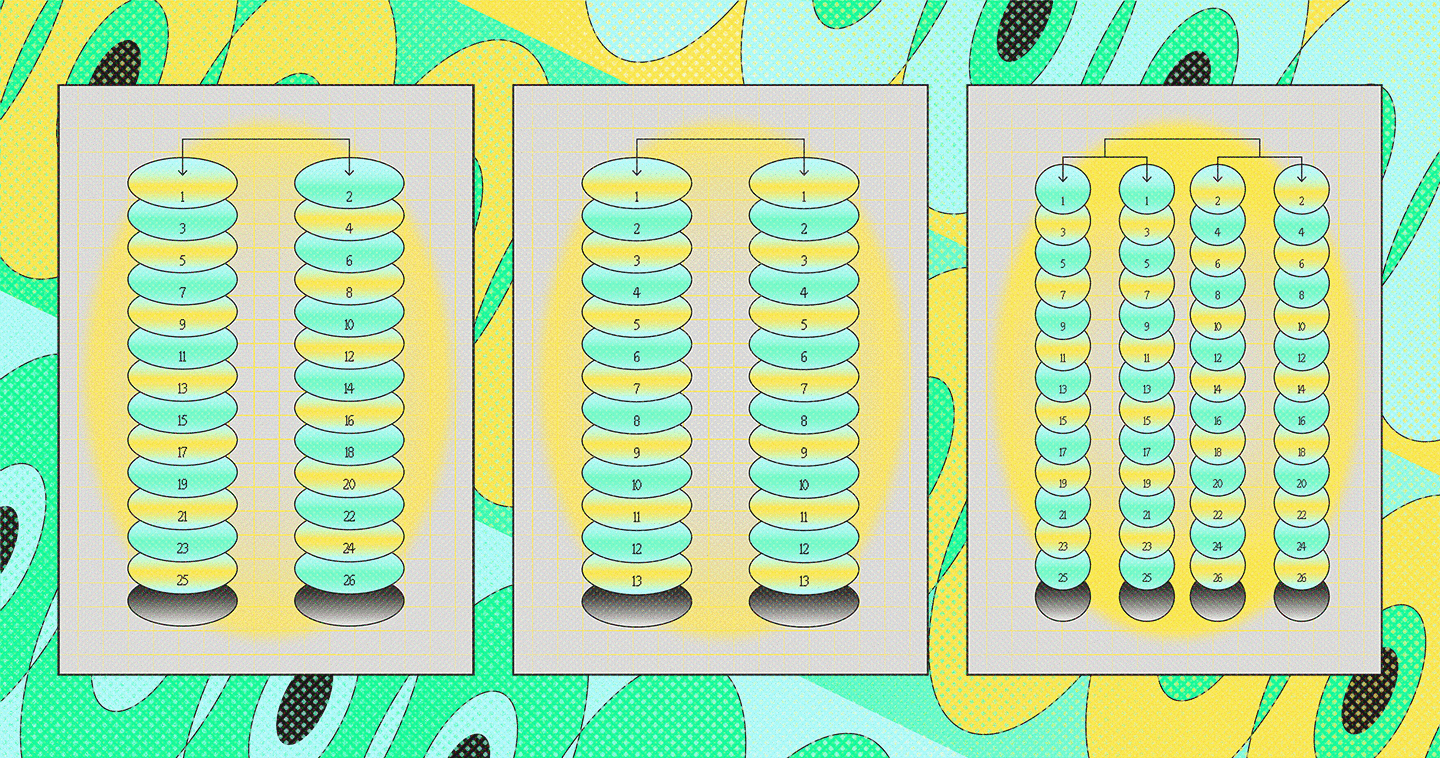

In addition to adding disks to a drive to gain capacity, another proven way to add capacity is to increase density by squeezing write tracks closer together. Imagine narrowing the lanes of a highway to fit more lanes of traffic.

However, a drive can only write to a sector so many times before there is a risk of magnetic interference on the sectors adjacent to it. The number of times a sector has received interference, when a sector was last rewritten, and the position of the write head are key drive metadata that are stored in DRAM. The precision of that metadata is limited in DRAM, so the drive must sometimes infer, or make an educated guess at, the precise track and position where data was written. To make sure the correct data is being accessed, the drive will go back and re-read the data, and then rewrite tracks to prevent any data corruption.

This entire process, which actually occurs very quickly, is called an ATI refresh. As tracks are moved closer and closer together to increase capacity, the number of writes allowed before a refresh must decrease to prevent any data corruption. Doing more refreshes increases latency, since the drive must take time to read and rewrite more often.

“It used to be, not that many generations ago, that you could write 10,000 times before needing to refresh sectors on either side,” Hall said. “And then as we pushed the tracks closer and closer together, it went to 100 then 50 then 10, and now for some sectors, it’s as low as six.”
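The relationship Hall describes between the write limit and the number of refreshes can be illustrated with a toy model. The sketch below is not Western Digital firmware: the `AtiTracker` class, the per-track counters, and the `refreshes_for` helper are hypothetical names, and the model deliberately ignores the interference that a refresh itself would cause on its own neighbors. It only shows how a lower threshold multiplies refresh work for the same write pattern.

```python
from collections import defaultdict


class AtiTracker:
    """Toy model of adjacent track interference (ATI) bookkeeping.

    Hypothetical: a real drive tracks this with firmware-specific rules
    and granularity. Only the counting idea is illustrated here.
    """

    def __init__(self, num_tracks, refresh_threshold):
        self.num_tracks = num_tracks
        self.refresh_threshold = refresh_threshold
        self.interference = defaultdict(int)  # track -> writes seen next to it
        self.refreshes = 0

    def write(self, track):
        # Writing a track lays down fresh data, so its own count resets.
        self.interference[track] = 0
        for neighbor in (track - 1, track + 1):
            if 0 <= neighbor < self.num_tracks:
                self.interference[neighbor] += 1
                if self.interference[neighbor] >= self.refresh_threshold:
                    self._refresh(neighbor)

    def _refresh(self, track):
        # A refresh re-reads and rewrites the track, clearing its count.
        # Simplification: the rewrite's effect on other tracks is ignored.
        self.interference[track] = 0
        self.refreshes += 1


def refreshes_for(threshold, writes=10_000, track=5):
    """Count refreshes caused by repeatedly writing one track."""
    tracker = AtiTracker(num_tracks=10, refresh_threshold=threshold)
    for _ in range(writes):
        tracker.write(track)
    return tracker.refreshes


for threshold in (10_000, 100, 10, 6):
    print(threshold, refreshes_for(threshold))
```

Under these assumptions, 10,000 writes to a single track trigger 2 neighbor refreshes at a threshold of 10,000, but 3,332 at a threshold of six, which is the latency pressure the article describes.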

It’s a delicate balance, because narrowing the write tracks too far would force refreshes so often that the performance impact becomes unacceptable. And that’s where the NAND enhancement in OptiNAND plays a key role.

iNAND was an obvious choice for integration: an existing, fast, non-volatile memory option in a small form factor that could hold far more cached data than DRAM or NOR flash.

Murin said when it came time to evaluate options, engineers on the HDD side were looking for a managed flash memory. “[iNAND] is a very small, large-volume, sufficiently fast, and already existing technology,” Murin said. “NAND is a denser, cheaper component than DRAM, although slower at writing. NAND, though, needs to be managed, and that is where iNAND comes in.”

Write-cache: enabled or disabled?

For some, it’s not important when data is physically written to disk. That the system has received the data and queued it for writing to non-volatile storage is validation enough. This is write-cache enabled.

For others, it’s of dire importance that nothing else be done with the drive until the data is written and stored safely on a disk. While it may take more time, a write notice means data has been safely stored in case of any power outage or other occurrence that might interrupt HDD operations. This is write-cache disabled.

By enabling write-cache, the drive can be a little more dynamic with its operations, because it can schedule writes and reads (and refreshes) in the most efficient order possible. Thus, performance will be faster. But there is that slim chance that, in a sudden power loss, the drive never actually has the chance to write the data to disk.

But with OptiNAND, the data will be saved to non-volatile NAND memory to prevent data loss.

“When we have non-volatile cache available, we have enough energy in the drive to take the data and write it to the NAND before any data is lost,” Boyle said. “When write-cache is disabled, we can leave it in DRAM knowing that if power is lost, we can make it safe.”

Say, for whatever reason, power is cut off during the write process on a large data batch. The System-on-a-Chip (SoC) of an OptiNAND drive — in under a second — will use the rotational power generated by the already spinning disks inside the drive to power internal capacitors until any cached data transfers to non-volatile NAND. Previously, without an iNAND component, that data would potentially be lost.
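The difference between the two write-cache modes, and what a non-volatile cache changes on power loss, can be sketched as a small state model. This is an illustration only, not the drive's actual logic: `ToyDrive` and its methods are hypothetical, the three dictionaries stand in for DRAM, NAND, and disk, and the rule that a drive with NAND can acknowledge from DRAM even with write-cache disabled is an assumption based on Boyle's remark above.

```python
class ToyDrive:
    """Toy model of write acknowledgement and power-loss handling.

    All names are hypothetical; this is not drive firmware.
    """

    def __init__(self, write_cache_enabled, has_nand):
        self.write_cache_enabled = write_cache_enabled
        self.has_nand = has_nand
        self.dram = {}  # volatile cache, lost when power is cut
        self.nand = {}  # non-volatile flash
        self.disk = {}  # non-volatile magnetic media

    def write(self, lba, data):
        # Assumption: with NAND as a safety net, data may wait in DRAM
        # even when the host has disabled the write cache.
        if self.write_cache_enabled or self.has_nand:
            self.dram[lba] = data  # acknowledged once queued
        else:
            self.disk[lba] = data  # acknowledged only after the media write
        return "ack"

    def flush(self):
        # Normal operation: queued writes reach the disk in the background.
        self.disk.update(self.dram)
        self.dram.clear()

    def power_loss(self):
        # With NAND, cached data is moved to flash before DRAM goes dark.
        if self.has_nand:
            self.nand.update(self.dram)
        self.dram.clear()

    def power_on(self):
        # Data preserved in NAND is replayed to disk after restart.
        self.disk.update(self.nand)
        self.nand.clear()

    def read(self, lba):
        return self.dram.get(lba, self.disk.get(lba))


def survives_power_loss(write_cache_enabled, has_nand):
    drive = ToyDrive(write_cache_enabled, has_nand)
    drive.write(1, b"payload")
    drive.power_loss()
    drive.power_on()
    return drive.read(1) == b"payload"
```

In this model, an acknowledged write is lost only in the one case the article warns about: write-cache enabled with no non-volatile cache behind it.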

To prove there would no longer be a significant performance trade-off, Hall plotted random-write performance in a simulator and showed that the difference between write-cache enabled and write-cache disabled was practically non-existent.

“In some cases, you can get up to an 80% gain in performance,” he said. “So now those customers that run write-cache enabled that were running up against I/O limits, if they get a lot more performance, they can use a lot more of that capacity.”

Future roadmap

This certainly isn’t the endpoint, but the first waypoint on a roadmap of features and product updates Western Digital plans to implement. As it is, Hall and other leaders at Western Digital are expecting the capacity for an individual drive to reach 50TB before the end of the decade.

For now, as the new architecture enters its final development stages, the company is trialing it with select HDD customers. It has made clear, however, that it intends to use OptiNAND across many segments in the future, including hyperscale, cloud, smart video, NAS, professional external storage, platforms, JBODs, cold storage, and others.

The OptiNAND architecture is a triumph of collaboration between the HDD and NAND sides of Western Digital. The project drew on nearly every discipline and area of expertise in each business, and it required challenging a lot of norms.

When asked how features are decided, Hall said it’s a matter of trusting the team and removing barriers to brainstorming the best ideas.

“You open up the door and remove a constraint to the system,” he said. “Say, ‘Yes, this is the world we’ve all been living in, but what can we do if we just remove this constraint?’ From this point, new opportunities can be envisioned, vetted, and ultimately implemented.”

To find out more about OptiNAND, see Western Digital’s press release and technology brief.