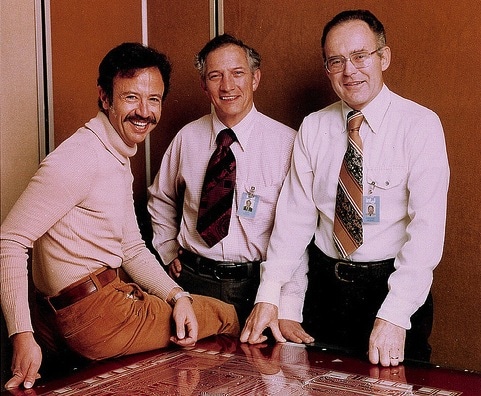

Moore’s Law drives the innovation we take for granted every day – from smartphones to the Internet to big data. For those unfamiliar with Moore’s Law, it was first identified by Gordon E. Moore, co-founder of Intel, in a 1965 paper observing that the number of transistors on integrated circuits had been doubling roughly every year; in 1975 he revised the pace to approximately every two years, the figure most often quoted today. The Law further predicted that this pace would continue. After nearly 50 years, it is amazing how this “Law” continues to ring true for CPU and memory performance, allowing them to keep scaling to meet the needs of today’s data-hungry environments. (Even Moore himself thought the trend would hold for only ten years.)

However, when it comes to an equally important piece of the data management chain, storage, the industry has found itself bumping up against a wall. Traditional spinning hard disk drives have kept pace with Moore’s Law in capacity, but in some cases performance has actually slowed down as capacities grew. The first 15K RPM hard drive was introduced more than 10 years ago, yet we have still not seen 30K or even 20K RPM drives. The reason is mechanical: physical limitations prevent hard drives from achieving faster rotational speeds.

Over the years an exponential gap has grown between the advancements of CPU and memory and what storage can handle. While CPU, memory and networking have all continued to follow Moore’s Law and double performance about every two years, hard drive performance has lagged behind: Moore’s Law (really Kryder’s Law, in the case of disk) has helped hard drive density but not performance. Server and storage vendors invest heavily in controllers that use processors, DRAM and other techniques to work around the hard drive bottleneck, but those can only help so much.
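To get a feel for how quickly that gap opens up, here is a minimal sketch of the arithmetic. It assumes a component that doubles in performance exactly every two years and a hard drive whose performance stays flat; both are simplifications, and the function names and the 8-year span are illustrative choices, not measurements.

```python
def doubling_growth_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """Growth factor after `years` for something that doubles every
    `doubling_period_years` (idealized Moore's Law pace)."""
    if doubling_period_years <= 0:
        raise ValueError("doubling_period_years must be positive")
    return 2.0 ** (years / doubling_period_years)


def performance_gap(years: float, storage_growth_factor: float = 1.0) -> float:
    """How many times faster the doubling component has become relative to
    storage. storage_growth_factor=1.0 is the assumption that storage
    performance did not improve at all."""
    if storage_growth_factor <= 0:
        raise ValueError("storage_growth_factor must be positive")
    return doubling_growth_factor(years) / storage_growth_factor


if __name__ == "__main__":
    # Illustrative span: 8 years means 4 doublings.
    print(doubling_growth_factor(8))  # 16.0
    print(performance_gap(8))         # 16.0 when storage stays flat
```

Under these assumptions, eight years of doubling leaves the rest of the system 16 times faster relative to a drive that has stood still, which is why the gap is described as exponential rather than linear.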

Over time, this difference in performance creates major inefficiencies, requiring rearchitecting or rewriting applications for balanced system utilization. But with flash, the fix is easier!

How We’ve Advanced

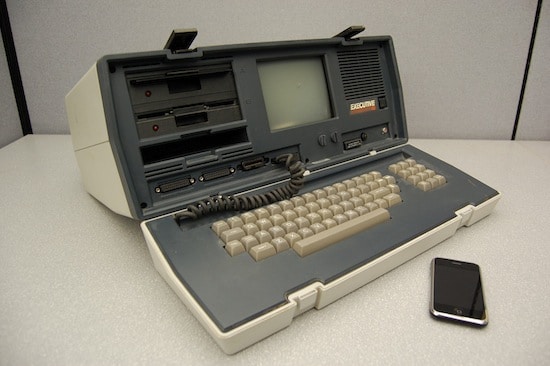

Let me compare how far we’ve advanced since Hadoop was introduced (and how far HDDs have fallen behind). In 2006, when Hadoop was first used, a typical 2U server had a 3.8 GHz 2-core CPU, two 1-Gigabit Ethernet ports, and a maximum of 8 GB DRAM.

Now, in July 2014, the typical 2U server has a 3.5 GHz CPU with 12 cores(!), offering six times the performance, and 32 GB of DRAM that’s 4X faster. The network has also seen a massive improvement: dual 10GbE network adapters are now standard, making it 10x faster.

The only part of that system that hasn’t gotten much faster is the HDDs. They are denser, offering more capacity, but with no significant change in random IO performance. This has distorted the balance Hadoop was originally architected around, shifting the bottleneck to storage.

Architecting to Overcome Storage Bottlenecks

To overcome these challenges in data centers, there have been two possible approaches – either stitching together massive numbers of HDDs to achieve the required IOPS (input/output operations per second), or re-architecting applications to fit storage hardware limitations (including slowing them down!).
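The first approach is easy to put into numbers. The sketch below estimates how many drives you would need to stitch together to hit a target IOPS figure. The per-drive IOPS values and the target are hypothetical placeholders chosen only to show the shape of the calculation; substitute figures from your own drive data sheets and workload measurements.

```python
def drives_needed(target_iops: int, iops_per_drive: int) -> int:
    """Smallest number of drives whose combined IOPS meets the target,
    assuming IOPS add up linearly across drives (an idealization)."""
    if target_iops < 0:
        raise ValueError("target_iops must not be negative")
    if iops_per_drive <= 0:
        raise ValueError("iops_per_drive must be positive")
    # Integer ceiling division, avoiding floating-point rounding.
    return -(-target_iops // iops_per_drive)


if __name__ == "__main__":
    # All three numbers below are hypothetical, for illustration only.
    target = 100_000   # random IOPS the application needs
    hdd_iops = 200     # assumed random IOPS for one hard drive
    ssd_iops = 50_000  # assumed random IOPS for one SSD

    print(drives_needed(target, hdd_iops))  # 500
    print(drives_needed(target, ssd_iops))  # 2
```

With these placeholder numbers, the same target takes hundreds of hard drives but only a couple of SSDs, and every extra drive also brings power, space and failure-rate costs that the calculation ignores.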

Let’s take Hadoop as an example. Hadoop was designed to balance CPU, memory, networking and storage so that IT managers could benefit by using a cluster of the lowest cost server nodes as an alternative to buying a very expensive mainframe computer. But as the industry’s hunger for data grows, traditional approaches simply can’t act as a solution without scaling to unrealistic levels. A new storage approach is needed if we are to re-balance the data center and alleviate the bottleneck presented by storage.

The Good News

The good news is that Solid-State Drives (SSDs) have brought storage back in line with Moore’s Law. If we look at the progression of solid-state drives for the enterprise over the last few years, we can see both performance and capacity following the proper curve more closely. For instance, just three short years ago the average SSD achieved around 250MB/s throughput and capacity topped out at about 512GB. Today, SanDisk® delivers enterprise-grade SAS solid-state drives achieving 1000MB/s data transfer, and reaching up to 4TB of capacity in a standard 2.5-inch form factor. And it’s not stopping here. We will continue to see performance gains and greater capacity points as flash lithography continues to get smaller, and as we see the introduction of 3D NAND and other developments on the horizon come to fruition.

Take a look at the chart below illustrating the dramatic gap that has grown between HDD performance, which has remained almost static, and SSD performance over the years, specifically for random read/write. HDD performance has improved by only 13%, while SSD performance improved by 400-500%! And we’re not slowing down.

HDDs are breaking the Law (of Moore), not by being too fast but by being too slow. While other chip-based technologies like processors and flash storage speed by, HDDs are getting traffic tickets for blocking traffic in the slow lane.

What are your thoughts on storage and Moore’s Law? Let us know by joining the conversation with @SanDiskDataCtr.

This blog was written by Steve Fingerhut, Former Vice President of Marketing, SanDisk Enterprise Storage Solutions