By Martin Fink, CTO

In today’s environment, the need for innovation has never been greater, especially as data creation continues to grow exponentially and the value of data expands with it. That is why I am especially proud of our announcement today at the 7th RISC-V Workshop: we will transition future core, processor, and controller development to the free and open RISC-V instruction set architecture (ISA). After a few years, we expect to ship two billion RISC-V cores annually, a significant commitment to enabling innovation.

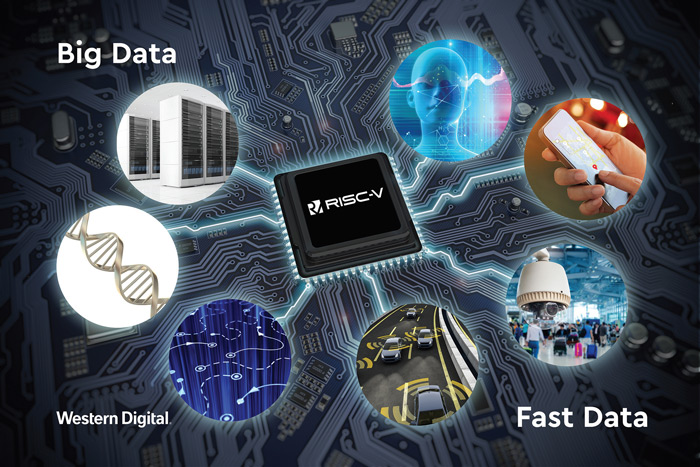

The Shift: Big Data and Fast Data

There has always been value in capturing, preserving, and accessing data, from the beginning of the written word and the Library of Alexandria. Modern technology has made it easier to manage data and extract its value. Today, however, the volume of data is doubling every two years, driven by a growing number of data-generating sources: mobile devices, machine sensors, IoT devices, healthcare monitors, and of course, existing applications. The amount of data we’re generating will reach hundreds of zettabytes in the next decade. In fact, there will be so much data, with so much inherent value, that it will require ever-expanding storage.

Beyond the quantity of data, there’s the speed at which it’s growing and being accessed.

This is what is known as Big Data and Fast Data, and it requires new thinking and innovation. Big Data – the very large data sets that are analyzed via computations and algorithms – often yields insights that can achieve better outcomes. Fast Data, on the other hand, is processed as it’s generated and captured for analysis. This most often leads to real-time decisions and results, allowing applications to respond to events as they happen.

Together, Big Data and Fast Data form a mutually dependent relationship. Big Data applications deliver optimized algorithms which lead to better decisions made by Fast Data applications; Fast Data applications in turn generate more data from more data sources, fueling larger Big Data environments.

Facing the Limits of General Purpose ISAs

With Big Data and Fast Data, general purpose instruction set architectures (ISAs) can’t always get the job done. Traditional infrastructure and system architectures are reaching their limits – a ‘one size fits all’ approach fails to meet the workload demands of increasingly diverse applications in a data-centric world.

Purpose-built ISAs that allow for independent scaling of resources are key to succeeding in data-rich environments. Tomorrow’s data-centric compute architectures will need the ability to scale resources independently of one another. We’ll need architectures that go beyond the limited resource ratios of general-purpose compute architectures to optimize processing, memory, storage, and interconnect.

Accelerating the Next Generation of Apps

RISC-V – a modular, open architecture – enables the industry to extend its general-purpose foundation to purpose-built workloads by adding new instruction set modules tailored to those workloads.

It can be scaled to the exact needs of any given application, from IoT devices to embedded systems on chip (SoCs) to data center CPUs and accelerators, while maintaining common open source software ecosystem support across all of them. Because the architecture is designed for efficient scaling, you can add as much memory as you need for a particular application environment.
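To make the modular idea concrete, here is a minimal Python sketch of how a RISC-V configuration name such as RV32IMAC can be read as a base plus a list of add-on modules. The `describe_isa` helper and the `EXTENSIONS` table are hypothetical names introduced only for this illustration, and the table covers just a handful of the standard single-letter extensions.

```python
# Illustrative subset of the standard single-letter RISC-V modules.
EXTENSIONS = {
    "I": "base integer instructions",
    "E": "base integer instructions for embedded use (fewer registers)",
    "M": "integer multiplication and division",
    "A": "atomic memory operations",
    "F": "single-precision floating point",
    "D": "double-precision floating point",
    "C": "compressed 16-bit instructions",
}


def describe_isa(name):
    """Split a simple ISA name such as 'RV32IMAC' into width and modules.

    Hypothetical helper: it understands only the letters in EXTENSIONS plus
    the 'G' shorthand, not multi-letter extension names.
    """
    name = name.upper()
    if not name.startswith("RV"):
        raise ValueError(f"not a RISC-V ISA name: {name!r}")

    # Collect the register width (32, 64, or 128) that follows 'RV'.
    rest = name[2:]
    digits = ""
    while rest and rest[0].isdigit():
        digits += rest[0]
        rest = rest[1:]
    if digits not in ("32", "64", "128"):
        raise ValueError(f"unsupported register width in {name!r}")

    # 'G' is the conventional shorthand for the general-purpose set IMAFD.
    letters = rest.replace("G", "IMAFD")
    unknown = [c for c in letters if c not in EXTENSIONS]
    if unknown:
        raise ValueError(f"modules not covered by this sketch: {unknown}")

    return {
        "xlen": int(digits),
        "modules": [(c, EXTENSIONS[c]) for c in letters],
    }


if __name__ == "__main__":
    for isa in ("RV32IMAC", "RV64GC"):
        info = describe_isa(isa)
        print(isa, "->", info["xlen"], "bit")
        for letter, meaning in info["modules"]:
            print("   ", letter, "=", meaning)
```

A small IoT controller and a data center CPU can therefore share the same integer base and differ only in which modules they add on top of it.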

Open and scalable architectures enabled by RISC-V are important: they will help accelerate the deployment of data-centric applications for Big Data and Fast Data environments. Bringing compute power closer to the data minimizes the movement of data at the edge, thus optimizing processing, enabling smart machines and artificial intelligence, and providing a new class of low-power processing designs for the next generation of applications.

How RISC-V Works

The ISA comprises the machine language and I/O model of a computer or computing system; it defines everything a programmer needs to know in order to program it. RISC-V is an open ISA based on established reduced instruction set computing (RISC) principles. Its design enables fewer cycles per instruction (CPI) than a complex instruction set computer (CISC) architecture, mostly because it is based on a small set of simple and general instructions rather than a large set of complex and specialized ones, targeted at OS and application processing.
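To see where CPI fits into overall performance, here is a small Python sketch of the standard processor performance relationship: execution time equals instruction count times CPI, divided by clock frequency. The `execution_time` function is a hypothetical helper, and the instruction counts, CPI values, and clock speed below are invented purely for illustration; they are not measurements of any real processor.

```python
def execution_time(instruction_count, cpi, clock_hz):
    """Return run time in seconds: instructions x cycles per instruction / Hz."""
    return instruction_count * cpi / clock_hz


# Assumed, made-up figures for the same task on two designs at 1 GHz.
# The simpler ISA needs more instructions but fewer cycles for each one.
simple_isa = execution_time(instruction_count=1_200_000, cpi=1.2, clock_hz=1e9)
complex_isa = execution_time(instruction_count=1_000_000, cpi=2.0, clock_hz=1e9)

if __name__ == "__main__":
    print(f"simple ISA:  {simple_isa * 1e3:.2f} ms")
    print(f"complex ISA: {complex_isa * 1e3:.2f} ms")
```

The point of the sketch is that a lower CPI only pays off when it outweighs any increase in the number of instructions executed, so both factors have to be considered together.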

Why RISC-V Will Change the Game

It’s simple. Because of its open, modular approach, RISC-V is ideally suited to serve as the foundation of data-centric compute architectures. As an OS processor, it can enable purpose-built architectures by supporting the independent scaling of resources. Its modular design approach allows for more efficient processors for edge and mobile systems.

Additionally, RISC-V can be freely used for any purpose: anyone can design, manufacture, and sell RISC-V chips and software. The ISA is free to implement, and a number of open source CPU core designs are available under a Berkeley Software Distribution (BSD) license.

I look forward to seeing how people use RISC-V to find ways to bring new ideas and innovations to data. Our goal at Western Digital is to create environments in which data can and will thrive. We believe that leveraging the open RISC-V ISA in our future core, processor, and controller development will do just that.

To learn more about what we’re doing with RISC-V and how we see it enabling data to thrive, please visit westerndigital.com/company/innovations/risc-v.

You’ll find more information and specifications on the RISC-V site.

And tell us what you think on Twitter: @westerndigital or @risc_v.