Ask any data center architect what keeps them up at night and they will likely mention downtime and how to avoid it. In order to plan for anticipated usage, companies often throw money at the problem, over-spending on IT resources that may sit idle most of the time. Sure, there might be peak loads that come with seasonal upticks or promotions and it’s important to be ready for those, but what about the rest of the time?

Just as a hotel manager strives to maximize occupancy, IT aims to maximize utilization of its investments and minimize idle capacity. Yet businesses generally see more than half of their IT equipment sitting idle, with utilization even as low as 15%. The introduction of the cloud has enabled a shift to dynamically available resources that has touched nearly every industry. With the click of a button, a business of any size can build a virtual environment with exactly the resources it needs. And purging those resources is just as easy.

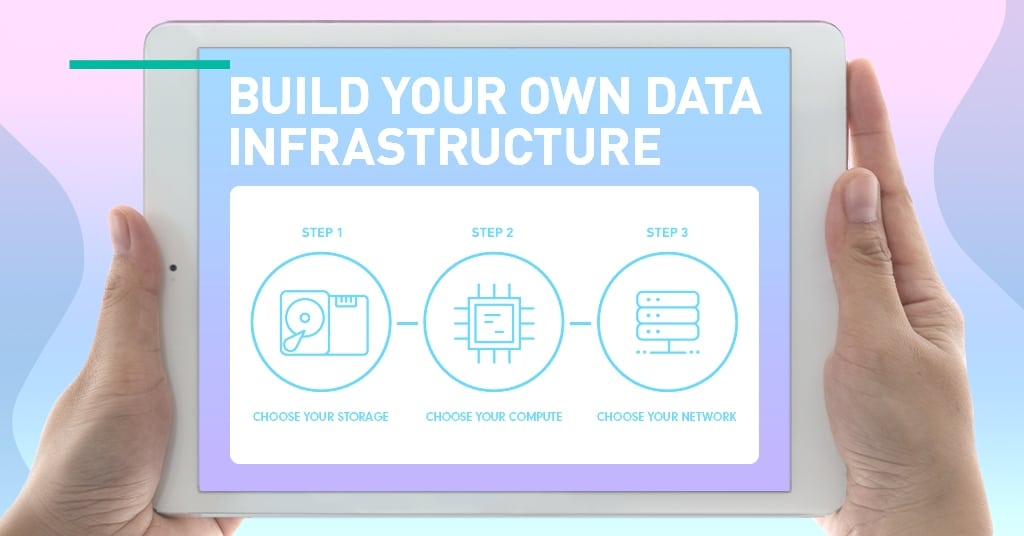

Now, that same ability to build virtual application environments on-the-fly is available for on-premises data centers in the form of composable disaggregated infrastructure (CDI). Similar to how large clouds are architected, CDI enables resources such as compute, networking, storage and GPUs to be pooled together as needed, then released for another workload to use.

“There’s an evolution happening in data center transformation,” said Sumit Puri, CEO of Liqid, a software-defined composable infrastructure platform provider. “It’s a move from static to dynamic.”

A Slice of Available Resources, Custom-Made with the Right Ingredients

In the past, organizations had to define what their IT infrastructure would look like at the point of purchase, planning three to five years ahead, with the hope they got it right. But as Puri explained, “now, the move is to dynamically configured infrastructure where hardware is disaggregated and you can compose servers based upon pools of hardware in a very dynamic way.”

“It’s difficult to anticipate storage, network and compute needs in an evolving data center,” said Scott Hamilton, senior director, platforms product management and marketing at Western Digital. As new applications are onboarded, the past norm was to just buy more servers with more storage (and more compute). Hamilton explained that “very quickly an organization can wind up with a multitude of processing platforms and software licenses that, while required for peak demand, go mostly unused and underutilized.” And with today’s unprecedented data growth and unpredictable future needs, it’s no longer affordable to buy infrastructure just for the peaks.

Sounds Like a No-Brainer. Why Haven’t We Done This Before?

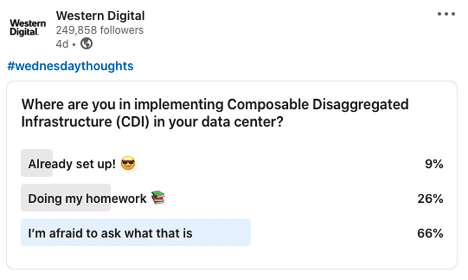

A recent poll on Western Digital’s social media channels revealed that few people are aware of this new architectural approach.

According to Puri, when his customers hear about CDI, their first responses are: “Is this magic? You’re telling me I can add SSDs to a running server with four keystrokes or the click of a mouse?” After they get over the disbelief, the next thing they tend to ask is “why haven’t you done this before?”

While the concept has been building for the past 15-20 years, CDI is just now coming of age. That’s because it wasn’t possible without advances in networking technologies like NVMe™ over Fabrics (NVMe-oF™) or the connectivity of PCIe Gen3.

What CDI Brings to the Table

Efficiency is the biggest breakthrough CDI enables.

“The biggest knob you can turn around TCO efficiency in the data center has to do with the hardware utilization rates,” said Puri. “If I have $100K of hardware I may be only getting 20% utilization out of that with a static, fixed infrastructure. But if I can break that static connection and move hardware around as needed, I can now squeeze 40%, 50% or 60% utilization out of that same $100K investment.”
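To make Puri’s arithmetic concrete, here is a minimal sketch that works through his $100K example. The function names are hypothetical and chosen only for illustration, and the model is deliberately simple: it treats utilization as the share of the hardware spend that is doing useful work.

```python
def useful_spend(investment: float, utilization_pct: float) -> float:
    """Dollars of the investment doing useful work at a given utilization.

    Illustrative model only: utilization is treated as the fraction of
    the hardware spend that is actually in use.
    """
    return investment * utilization_pct / 100


def relative_capacity(baseline_pct: float, improved_pct: float) -> float:
    """How much more work the same hardware does at the improved utilization."""
    return improved_pct / baseline_pct


INVESTMENT = 100_000  # the $100K figure from the quote
BASELINE_PCT = 20     # static, fixed infrastructure

for pct in (20, 40, 50, 60):
    in_use = useful_spend(INVESTMENT, pct)
    gain = relative_capacity(BASELINE_PCT, pct)
    print(f"{pct}% utilization: ${in_use:,.0f} in use, "
          f"{gain:.1f}x the 20% baseline")
```

Read this way, moving from 20% to 60% utilization triples the work done by the same $100K of hardware, without buying anything new.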

Greater efficiency means using fewer resources to do the same amount of work. A company might have 15 divisions that all want to perform analytics, but not at the same time. Instead of purchasing 15 GPUs, each of which is used only 15%-20% of the time, the company can purchase a shared pool of five or six, and each division can use them as needed.
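A rough way to sanity-check a pool of that size is to estimate how often more divisions would want a GPU than the pool holds. The sketch below assumes each division needs a GPU independently of the others, for a fixed fraction of the time (a simple binomial model). That independence is an assumption made here for illustration, not something the example above states, and real workloads that peak together would need a larger pool.

```python
from math import comb


def prob_demand_exceeds(n_users: int, p_busy: float, pool_size: int) -> float:
    """Probability that more than pool_size of n_users want a GPU at once.

    Assumes each user is busy independently with probability p_busy
    (binomial model); this is an illustrative assumption.
    """
    return sum(
        comb(n_users, k) * p_busy**k * (1 - p_busy) ** (n_users - k)
        for k in range(pool_size + 1, n_users + 1)
    )


DIVISIONS = 15  # from the example above

for p_busy in (0.15, 0.20):
    # Average number of GPUs in demand at any moment.
    print(f"usage {p_busy:.0%}: average demand {DIVISIONS * p_busy:.2f} GPUs")
    for pool in (5, 6):
        shortfall = prob_demand_exceeds(DIVISIONS, p_busy, pool)
        print(f"  pool of {pool}: demand exceeds pool {shortfall:.1%} of the time")
```

Under these assumptions, average demand is only two to three GPUs. At 20% usage, a pool of five would be oversubscribed roughly 6% of the time and a pool of six roughly 2% of the time, which is consistent with sizing the pool at five or six rather than 15.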

But it’s more than efficiency alone.

Keeping Pace with the Velocity of Change

According to Hal Woods, storage industry technologist and a former CTO, agility is one of the key benefits of CDI. As data center requirements grow, IT needs to be able to react quickly to changing business conditions, threats or opportunities. “We all know change is a constant,” said Woods. “With CDI, I can respond to change quickly and adapt to changing workloads. And as things progress, I can even change my mind!”

Furthermore, CDI is able to sustain the velocity of change in the data center. The goal is to keep up with the fast pace of development so that infrastructure is not a business bottleneck. Armed with data from real workloads, infrastructure can be recomposed to better meet the needs of the business.

“With the pace of change, traditional IT is challenged by keeping up with the complexity of data and applications. CDI can be a business enabler,” said Hamilton, providing an example from one of Western Digital’s customers: the ICM Brain and Spine Institute.

The ICM Brain and Spine Institute leverages Western Digital’s OpenFlex™ open composable platform to resize and reallocate storage volumes on demand, assisting the institute’s fight against neurological disorders. According to ICM CTO Caroline Vidal, by leveraging OpenFlex CDI “we can provide fast, low-latency access to imaging data, in up to four times the resolution than researchers could work with before. The shared storage with NVMe-oF is just there when our scientists need it, so they can focus on using the data and advancing their research.”

ICM’s IT team can quickly and easily allocate storage to meet any researcher’s need, resizing and reallocating storage volumes on demand. This flexibility is essential as the institute adds more microscopes and other instruments in the coming years, continually driving up the resolutions and volumes of clinical imaging data. The ability to seamlessly add more resources as technology advances allows ICM to future-proof its IT model and investment.

The Data Center of the Future

CDI, while originally driven by the cloud titans, is making its way into industries such as healthcare, financial services, higher education, government and research. But it’s today’s workloads – such as AI, IoT at the edge, and more – that are driving adoption.

CDI brings efficiency, agility and the ability to withstand velocity to both on-premises data centers and cloud service providers beyond the cloud titans. With CDI, companies can avoid over-provisioning and stranded capacity, and take the guesswork out of planning for peaks. Companies can be nimble and avoid unnecessary spending to optimize IT resources and keep pace with change.