There is an old saying that “there is no such thing as a free lunch.” So, whether you offer free or paid cloud services, it is critical to maintain the lowest cost possible, especially as you find ways to monetize the service.

In the world of scale-out cloud services, whether you are building the next hiking app, bicycle ride-sharing app, or expense tracking app for corporate travelers, minimizing or reducing your total cost is important.

How to Approach Total Cost of Ownership

The “T” in TCO represents the TOTAL cost of ownership, and sometimes determining all the costs of a given solution can be daunting. However, there are ways to simplify the analysis.

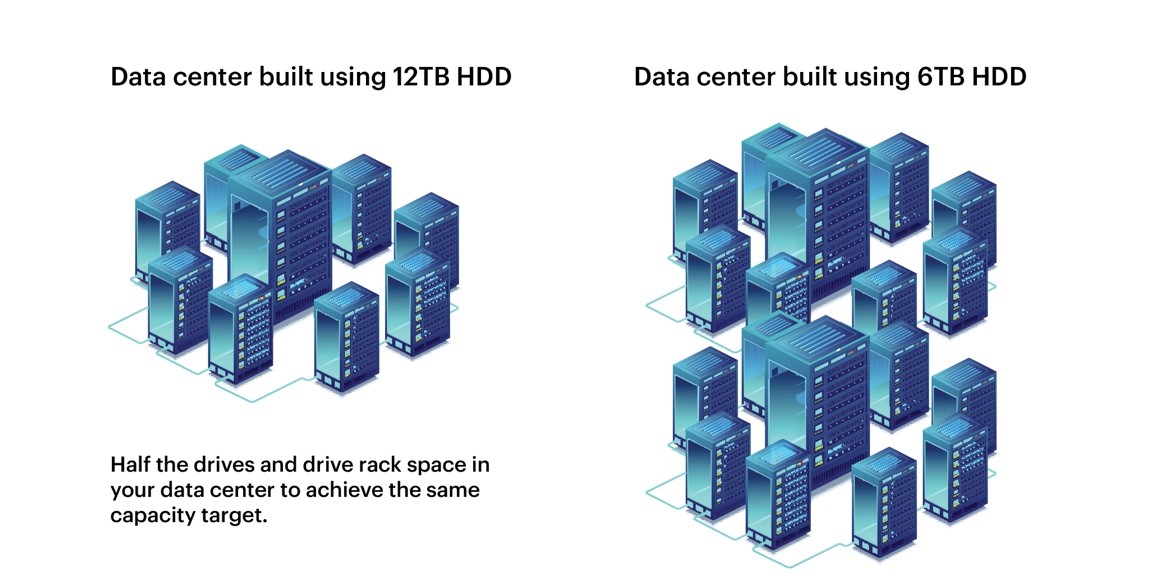

One simplified way to think of TCO is to look at how much you save when comparing two options. For example, if you build your data center with 12 TB[1] hard drives instead of 6 TB, you need only half the drives and drive rack space in your data center to achieve the same capacity target.

The smaller data center solution eliminates substantial costs.

- OpEx is reduced through lower building lease or construction costs. Heating and cooling expenses are reduced, and there are no maintenance expenses for the drives that don’t exist!

- CapEx is reduced when substantial non-hard drive equipment is eliminated. This includes networking and server equipment, as well as the racks and enclosures that contain the hard drives.

One way to achieve data center footprint reduction is by deploying the highest capacity storage available for each new data center, and the cost savings can then be calculated.
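The drive-count side of that calculation can be sketched in a few lines. This is a minimal illustration, not a sizing tool: the 1,200 TB target is a hypothetical figure chosen for the example, and the function name is our own.

```python
import math

def drives_needed(target_tb: float, drive_tb: float) -> int:
    """Number of drives required to reach a raw capacity target."""
    return math.ceil(target_tb / drive_tb)

# Hypothetical raw capacity target, in TB (not taken from the article).
target_tb = 1200

for drive_tb in (6, 12):
    count = drives_needed(target_tb, drive_tb)
    print(f"{drive_tb} TB drives: {count} drives needed")
```

Every drive that is not needed also removes its share of slots, enclosures, and rack space, which is where the CapEx and OpEx reductions listed above come from.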

The largest public cloud service providers are compelled to build the most cost-efficient data centers by using the highest capacity hard drives, and the hard drive industry is racing to provide the next leap in higher capacity. We’re proud to have announced the first 15TB HDD just a few weeks ago.

See how Dropbox uses the highest capacity and SMR to achieve cost savings

Even if you are only deploying a rack, the same principles apply: two racks versus one rack, or two JBODs instead of one.

Achieving the lowest $/TB cost can almost always be found by utilizing the highest capacity hard drive available, but what is the catch, and why are smaller hard drives still used today?

The obvious reason is performance. If you pay for two fully connected JBODs, you get roughly double the throughput because you spend twice as much on all of the data pipes. But that performance comes at a premium, and the data center architect’s task is to balance cost against true performance requirements. One approach is to take the TCO savings gained from using high capacity hard drives and apply a portion of them to increasing performance within the reduced data center footprint. Using SSDs or more powerful servers to eliminate critical bottlenecks, and creating caching layers, can help.

Also, using smaller hard drives makes sense when your data sets are just not that big. Exponential data growth is the universal trend, but there are many valuable data sets that just don’t require “big data” to process. It is still worth examining whether the data set is artificially limited because “someone thought” it was too expensive to store all the data available. That would be unfortunate, because more data might yield more insights.

TCO Example: Enterprise-Scale Ceph™ Cluster Using High Capacity Hard Drives

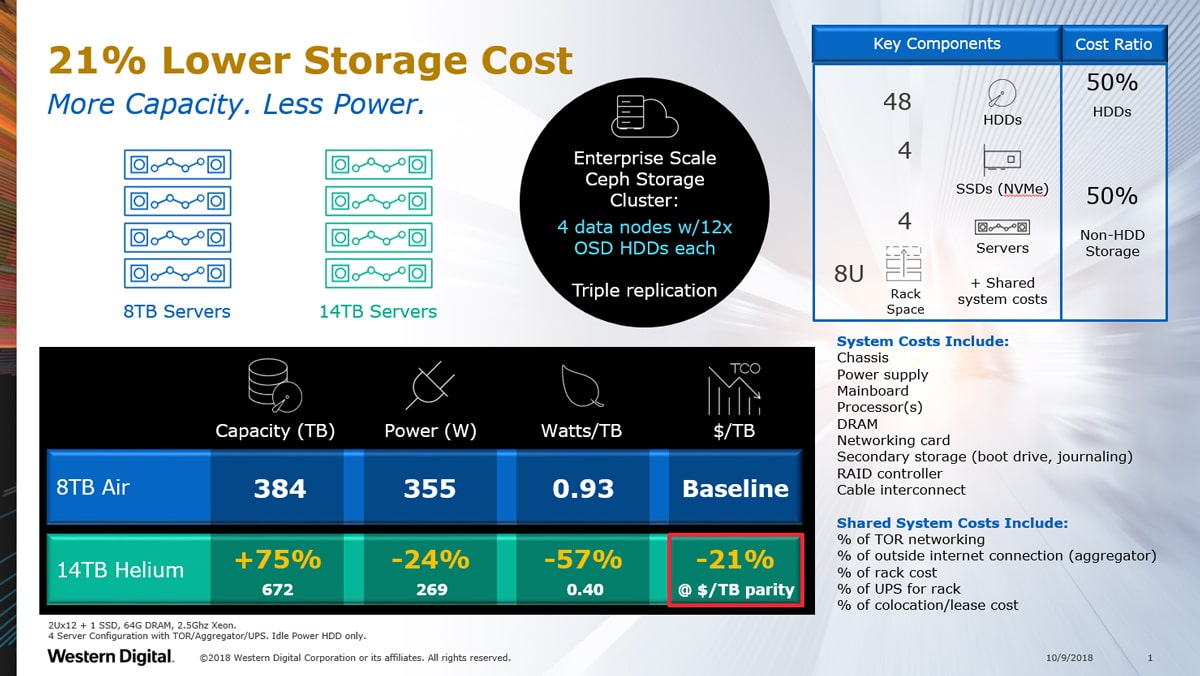

Let’s now apply this information to an example with our 14TB Ultrastar® DC HC530 hard drive, which offers unsurpassed capacity and delivers the most cost-effective non-SMR storage for data centers.

Our base case is a Ceph object storage cluster design using 8TB Ultrastar air-based drives. We compare it to a similar cluster using 14TB helium-based drives, simplifying some of the calculations by using readily available data.

Let’s start with the basic storage node containing 12 HDDs using a 2U enclosure (2Ux12) that includes a dual-processor main-board, a host bus adapter for connecting 12 HDDs, Ethernet connections, and a power supply. The budget is $3100. Today, you can pick up 12x 8TB hard drives for about the same cost.[2]

| | Case 1 with 8TB (50:50) | Case 2 with 14TB (65:35) |
| --- | --- | --- |
| HDD Storage Cost | $3100 (12x 8TB HDDs = 96TB) | $5400 (12x 14TB HDDs = 168TB) |
| Enclosure, Server and Overhead Cost | $3100 | $3100 |

Case 1: Starts with a 50/50 split between the hard drive cost and all “other” costs. “Other” costs include the obvious cost of the enclosure, main-board, host bus adapter and networking connections. However, you must also consider the additional cost of adding a 2U data node into the data center. The node will take up rack space and power conditioning slots, as well as ports on the top of rack switch (TOR). The “slot tax” for adding a data node must be included.

Case 2: Exact same configuration, but with 12 larger 14TB hard drives. For this simple model, we will assume that the pricing for each drive is equivalent on a $/TB basis (i.e., the 14TB drive delivers 75% more capacity than an 8TB hard drive, and the assumption is that it costs 75% more than the 8TB drive as well, resulting in approximately a 65:35 split).

The 14TB solution delivers 75% more capacity but costs 21% less on a $/TB basis!
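The arithmetic behind that figure can be reproduced with a short sketch. It uses only the per-node numbers from the two cases ($3100 or $5400 of drives, $3100 of everything else, 12 drives per node); the `NodeConfig` class and its field names are our own illustrative choices.

```python
from dataclasses import dataclass

@dataclass
class NodeConfig:
    name: str
    drive_count: int
    drive_tb: float
    hdd_cost: float    # total cost of all drives in the node, USD
    other_cost: float  # enclosure, server and overhead, USD

    @property
    def capacity_tb(self) -> float:
        return self.drive_count * self.drive_tb

    @property
    def total_cost(self) -> float:
        return self.hdd_cost + self.other_cost

    @property
    def cost_per_tb(self) -> float:
        return self.total_cost / self.capacity_tb

    @property
    def hdd_share(self) -> float:
        """Fraction of the node cost spent on drives (the HDD:Other split)."""
        return self.hdd_cost / self.total_cost

case1 = NodeConfig("Case 1 (8TB)", 12, 8, hdd_cost=3100, other_cost=3100)
case2 = NodeConfig("Case 2 (14TB)", 12, 14, hdd_cost=5400, other_cost=3100)

capacity_gain = case2.capacity_tb / case1.capacity_tb - 1
savings = 1 - case2.cost_per_tb / case1.cost_per_tb

for case in (case1, case2):
    print(f"{case.name}: {case.capacity_tb:.0f} TB, "
          f"${case.cost_per_tb:.2f}/TB, HDD share {case.hdd_share:.0%}")
print(f"Capacity gain: {capacity_gain:.0%}, $/TB savings: {savings:.1%}")
```

With the rounded figures this works out to roughly $64.58/TB versus $50.60/TB, a saving of a little over 21%. The savings come entirely from spreading the fixed “other” cost over more terabytes, since the drives themselves are assumed to cost the same per TB.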

More Savings with Helium

Furthermore, power consumption drops when moving from air-based to helium-based drives. The 14TB solution using the Ultrastar DC HC530 delivers 26% power savings when looking at HDD idle power consumption. Because each drive also holds 75% more data, the power cost on a Watts/TB basis is reduced by 58%. In other words, your drives use less than half the electricity per terabyte when they are just idling, which cuts the idle-power portion of your OpEx for storing large amounts of data by more than half.
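The Watts/TB figure follows directly from the two percentages quoted here. The sketch below works in relative units, treating the idle power of one 8TB air-based drive as 1.0, because absolute wattages are not given in this article; the variable names are our own.

```python
# Figures from the text: 26% lower idle power per drive, 14TB vs 8TB capacity.
idle_power_saving = 0.26
capacity_ratio = 14 / 8  # 1.75, i.e. 75% more capacity per drive

# Idle power of one 14TB helium drive relative to one 8TB air drive (= 1.0).
relative_power_per_drive = 1 - idle_power_saving

# Divide by the capacity ratio to get relative idle Watts per TB.
relative_watts_per_tb = relative_power_per_drive / capacity_ratio
watts_per_tb_reduction = 1 - relative_watts_per_tb

print(f"Relative idle W/TB: {relative_watts_per_tb:.3f}")
print(f"Idle W/TB reduction: {watts_per_tb_reduction:.0%}")
```

The reduction applies to idle drive power per terabyte only; power drawn by servers, networking, and cooling is outside this simple model.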

Yes, there is an absolute cost increase of 38% for each node, but as you scale out you can recover this by either building out fewer nodes or offering more raw capacity in the same footprint.
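To see how the higher per-node cost is recovered at scale, here is a rough sketch that builds out both node types to the same raw capacity. The 1,344 TB target is a hypothetical value picked because both node sizes divide it evenly, and `build_out` is an illustrative helper. Note that with the rounded table prices the per-node increase computes to about 37%; applying the exact 75% drive price uplift ($5425) gives 37.5%, in line with the figure quoted above.

```python
import math

# Per-node figures from the two cases (USD and raw TB).
NODE_8TB = {"cost": 3100 + 3100, "capacity_tb": 12 * 8}
NODE_14TB = {"cost": 5400 + 3100, "capacity_tb": 12 * 14}

def build_out(node: dict, target_tb: float) -> tuple[int, int]:
    """Return (node count, total cost) needed to reach a raw capacity target."""
    nodes = math.ceil(target_tb / node["capacity_tb"])
    return nodes, nodes * node["cost"]

per_node_increase = NODE_14TB["cost"] / NODE_8TB["cost"] - 1

# Hypothetical raw capacity target: 14 x 96 TB = 8 x 168 TB.
target_tb = 1344
nodes_8, cost_8 = build_out(NODE_8TB, target_tb)
nodes_14, cost_14 = build_out(NODE_14TB, target_tb)

print(f"Per-node cost increase: {per_node_increase:.1%}")
print(f"8TB build:  {nodes_8} nodes, ${cost_8:,}")
print(f"14TB build: {nodes_14} nodes, ${cost_14:,}")
print(f"Total saved: ${cost_8 - cost_14:,}")
```

For this target the 8TB design needs 14 nodes and the 14TB design needs 8, so the more expensive nodes still produce the lower total.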

TCO Calculation for System and Shared System Costs

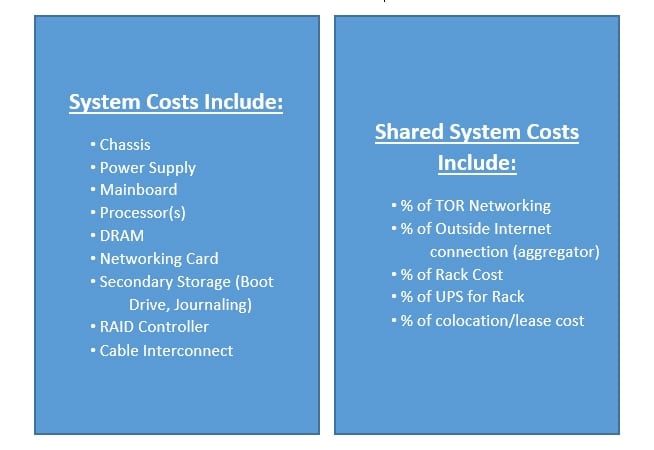

After you determine the ratio of hard drive costs to other costs (HDD:Other) you can quickly make a similar TCO calculation for different configurations that are either lighter or heavier on the storage component.

Just remember that “other” needs to include “ALL other” costs. This includes both system costs and shared-system infrastructure costs.

System costs can be derived from the bill of materials for the storage system being built, including all of the physical components.

The shared-system costs include the interconnection of the system to the rest of the data center, as well as the slot tax for the entire chassis.
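Once the HDD:Other ratio is known, the same comparison can be generalized. The following sketch assumes, as in the simple model earlier, that drive price scales exactly with capacity and that “other” costs stay fixed when the drives are swapped; the function name and the sample ratios are illustrative.

```python
def cost_per_tb_savings(hdd_share: float, capacity_ratio: float) -> float:
    """Fractional $/TB savings from moving to larger drives.

    hdd_share:      fraction of the baseline node cost spent on drives (0..1)
    capacity_ratio: new drive capacity divided by baseline drive capacity
    Assumes equal drive $/TB and unchanged "other" (system + shared) costs.
    """
    if not 0 <= hdd_share <= 1:
        raise ValueError("hdd_share must be between 0 and 1")
    if capacity_ratio <= 0:
        raise ValueError("capacity_ratio must be positive")
    other_share = 1 - hdd_share
    # New node cost relative to the baseline node cost (baseline = 1.0).
    new_relative_cost = hdd_share * capacity_ratio + other_share
    # Capacity grew by capacity_ratio, so divide to get relative $/TB.
    return 1 - new_relative_cost / capacity_ratio

# Storage-light, balanced, and storage-heavy baselines, 8TB -> 14TB drives.
for hdd_share in (0.3, 0.5, 0.7):
    savings = cost_per_tb_savings(hdd_share, 14 / 8)
    print(f"HDD:Other {hdd_share:.0%}:{1 - hdd_share:.0%} -> "
          f"{savings:.1%} lower $/TB")
```

Under these assumptions a 50:50 baseline saves about 21%, a storage-light 30:70 baseline about 30%, and a storage-heavy 70:30 baseline about 13%. The larger the “other” share, the more there is to gain from higher capacity drives.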

At scale, it is obvious why the largest public cloud data centers choose the highest capacity hard drives to lower their storage cost. However, whether you are building a hyperscale data center or a simple 4-node Ceph cluster, choosing higher capacity HDDs can lower your cost/TB by spreading “other” expenses over more capacity. This, in turn, gives you the option to reduce your total spend, use the savings to mitigate performance concerns with SSDs, or simply offer more storage capacity.

[1] One MB is equal to one million bytes, one GB is equal to one billion bytes and one TB equals 1,000GB (one trillion bytes) when referring to hard drive capacity. Accessible capacity will vary from the stated capacity due to formatting and partitioning of the hard drive, the computer’s operating system, and other factors.

[2] Values based on publicly available prices as of October 2018.