The world’s fastest supercomputer requires 21 million watts of power. Our brains, in comparison, hum along on a mere 20 watts, roughly the power drawn by a light bulb.

For decades, engineers have been fascinated by how our brains compute. Sure, computers will outperform us in their capacity for mathematical calculations. But they struggle with tasks the human brain seems to handle effortlessly.

Why is that?

Computing like the brain

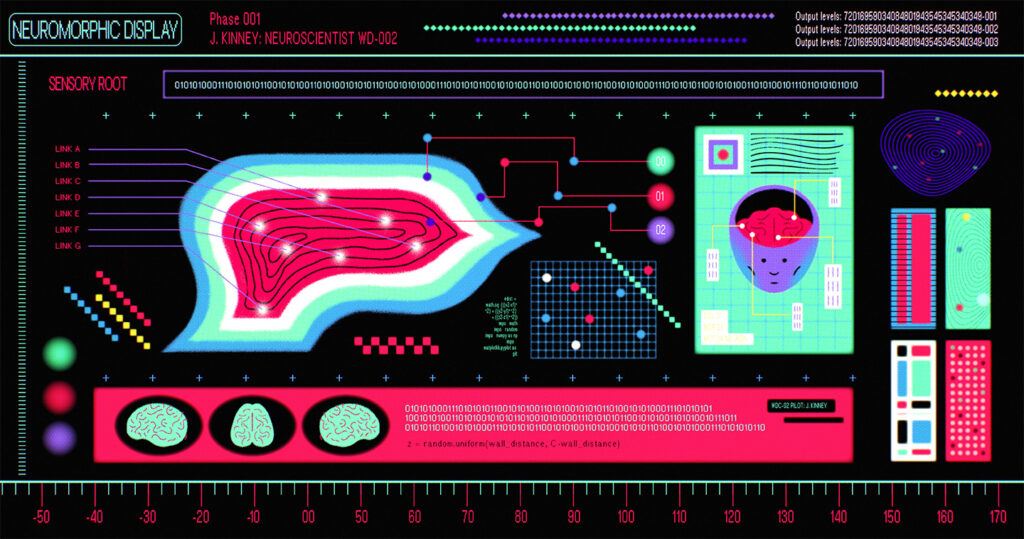

Justin Kinney, a neuroscientist, bioengineer, and technologist in Western Digital’s R&D, explained, “No one understands how the brain works, really.”

Kinney would know. He has spent much of his career trying to unravel the brain’s secrets. An engineer turned neuroscientist, Kinney hoped to join the neuroscience community, learn what it already knew, and then apply it to computing.

“Much to my dismay, I found neuroscientists don’t actually understand the brain. No one does. And that’s because there’s little data,” he said.

The brain is regarded as one of the most complex known structures in the universe. It has billions of neurons, trillions of connections, and multiple levels of organization, from the molecular and synaptic up to the cellular. But the biggest challenge is that the brain is difficult to access.

“The brain is encased in a thick bone,” said Kinney, “and if you try to access, poke, or prod it, it will get really upset and hemorrhage, and delicate neurons will die.”

Nevertheless, Kinney said progress is being made on various fronts, particularly in the field of recording brain activity, which is good news for those trying to build brain-like computers.

“What we’ve learned is that there are similarities in computing principles when it comes to how neurons communicate and how we use electronics and circuits to do functional tasks and manipulate digital information,” said Kinney.

“Ultimately, we’d like to build next-generation computing hardware utilizing all the brain’s tricks for efficient computing, memory, and storage.”

Neuromorphic computing

Dr. Jason Eshraghian is an assistant professor at the Department of Electrical and Computer Engineering at the University of California, Santa Cruz (UCSC) and leads the university’s Neuromorphic Computing Group.

Neuromorphic computing is an emerging field focusing on designing electronic circuits, algorithms, and systems inspired by the brain’s neural structure and its mechanisms for processing information.

Eshraghian emphasizes that his goal isn’t about replicating biological intelligence, though. “My goal isn’t to copy the brain,” he said. “My goal is to be useful. I’m trying to find what’s useful about the brain, and what we understand sufficiently to map into a circuit.”

One area that has been a particular focus for Eshraghian is the spiking mechanism of neurons. Unlike the constant activity of AI models like ChatGPT, the brain’s neurons are usually pretty quiet. They only fire when there is something worth firing about.
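
The "fire only when it matters" behavior can be illustrated with a leaky integrate-and-fire neuron, a common textbook model. The sketch below is illustrative only and is not taken from Eshraghian's work; the function name `simulate_lif` and the `leak` and `threshold` values are assumptions chosen for the example.

```python
def simulate_lif(inputs, leak=0.9, threshold=1.0):
    """Simulate one leaky integrate-and-fire neuron over discrete time steps.

    Returns a list of 0/1 spikes, one per input sample.
    The leak and threshold defaults are illustrative assumptions.
    """
    potential = 0.0
    spikes = []
    for current in inputs:
        # Let part of the stored potential leak away, then add the new input.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)
            potential = 0.0  # reset the membrane potential after firing
        else:
            spikes.append(0)
    return spikes


quiet = simulate_lif([0.0] * 5)                  # no input, so no spikes
burst = simulate_lif([0.0, 0.6, 0.6, 0.0, 0.0])  # fires once input accumulates
print(quiet, burst)
```

With no input the neuron stays silent, and it emits a single spike only once enough input has accumulated. That sparsity is the property spiking hardware hopes to turn into energy savings.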

Eshraghian asked, “How many times have you asked ChatGPT to translate something into Farsi or Turkish? There’s a huge chunk of ChatGPT that I personally will never tap into, and so it’s kind of like saying, well, why do I want that? Why should that be active? Maybe instead, we can home in on the part of the circuit that matters and let that activate for a brief instant in time.”

On his path toward brain-like computing, Eshraghian embraces another trick of the brain: the dimension of time—or the temporal dimension. “There’s a lot of argument about how the brain takes analog information from the world around us, converts it to spikes, and passes it to the brain,” he said. “Temporal seems to be the dominant mechanism, meaning that information is stored in the timing of a single spike—whether something is quicker or slower.”
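
One simple way to picture timing-based coding is latency encoding, where a stronger input produces an earlier spike. The following is a minimal sketch under that assumption; `latency_encode` and its linear mapping are hypothetical choices for illustration, not a description of how the brain or any particular chip encodes signals.

```python
def latency_encode(intensities, num_steps=11):
    """Map each intensity in [0, 1] to the time step of a single spike.

    Stronger inputs fire earlier. An input of 0 never fires (None).
    The linear mapping is an illustrative assumption.
    """
    spike_times = []
    for x in intensities:
        if x <= 0.0:
            spike_times.append(None)  # nothing worth firing about
        else:
            x = min(x, 1.0)
            # Intensity 1.0 fires at step 0; weaker inputs fire later.
            spike_times.append(round((1.0 - x) * (num_steps - 1)))
    return spike_times


times = latency_encode([1.0, 0.5, 0.1, 0.0])
print(times)
```

Each input is represented by when its one spike occurs rather than by how many spikes it produces, which is the sense in which the information lives in the timing.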

Eshraghian believes that taking advantage of the temporal dimension will have profound implications, especially for semiconductor chips. He argues that, eventually, we’ll exhaust the possibilities of 3D vertical scaling. “Then what else do you do?” he asked. “What I believe is that then you have to go to the fourth dimension. And that is time.”

Brain-like hardware

Building on spiking and temporal mechanisms, Eshraghian and his team have developed SpikeGPT, the largest spiking neural network for language generation. The neural network impressively consumes 22 times less energy than other large deep learning language models. But Eshraghian emphasizes that new circuits and hardware will be vital to unlocking its full potential.

“What defines the software of the brain?” he asked. “The answer is the physical substrate of the brain itself. The neural code is the neural hardware. And if we manage to mimic that concept and build computing hardware that perfectly describes the software processes, we’ll be able to run AI models with far less power and at far lower costs.”

Since the dawn of the information age, most computers have been built on the von Neumann architecture. In this model, memory and the CPU are separated, so data is constantly cycling between the processor and memory, expending energy and time.

But that’s not how the brain works. The brain is an amazingly efficient device because its neurons keep memory and computation in the same place.

Now a class of emerging memories (resistive RAM, magnetic memories like MRAM, and even memories made of ceramic) is showing potential for this type of neuromorphic computing by executing the basic multiplications and additions in the memory itself.
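
To make "multiplications and additions in the memory itself" concrete, here is a sketch of an idealized resistive crossbar, a commonly described scheme for in-memory computing. It assumes each cell stores a weight as a conductance, so Ohm's law performs the multiplication and the shared column wire sums the currents. The function `crossbar_mac` and the numbers are hypothetical, and real devices are noisy and far less tidy.

```python
def crossbar_mac(conductances, voltages):
    """Compute the output currents of an idealized resistive crossbar.

    conductances[i][j] is the conductance of the cell joining input row i
    to output column j; voltages[i] is the voltage applied to row i.
    """
    num_cols = len(conductances[0])
    currents = [0.0] * num_cols
    for row, v in zip(conductances, voltages):
        for j, g in enumerate(row):
            # Ohm's law gives each cell's current (g * v); currents on a
            # shared column wire add up (Kirchhoff's current law).
            currents[j] += g * v
    return currents


weights = [[1.0, 2.0],
           [3.0, 4.0]]   # stored in the memory cells (arbitrary units)
inputs = [0.5, 1.0]      # applied as row voltages
out = crossbar_mac(weights, inputs)
print(out)
```

The loop here only simulates what the physics would do in parallel: in such an array the weights never travel to a separate processor, which is the contrast with the von Neumann model described above.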

The idea isn’t farfetched. Recent collaborations, such as Western Digital’s work with the U.S. National Institute of Standards and Technology (NIST), have successfully demonstrated the potential of these technologies in curbing AI’s power problem.

Engineers hope that in the future, they could use the incredible density of memory technology to store 100 billion AI parameters in a single die, or a single SSD, and perform calculations in the memory itself. If successful, this model would catapult AI out of massive, energy-thirsty data centers into the palm of our hands.

Better than the brain

Neuromorphic computing is an ambitious goal. While the industry has more than 70 years of experience computing hard digital numbers through CPUs, memories are a different beast—messy, soft, analog, and noisy. But advancements in circuit design, algorithms, and architectures, like those brought about by Western Digital engineers and scientists, are showing progress that’s moving far beyond research alone.

For Dr. Eshraghian, establishing the Neuromorphic Computing Group at UCSC is indicative of the field’s shift from exploratory to practical pursuits, pushing the boundaries of what is possible.

“Even though we say that brains are the golden standard and the perfect blueprint of intelligence, circuit designers aren’t necessarily subject to the same constraints of the brain,” said Eshraghian. “Processors can cycle at gigahertz clock rates, but our brain would melt if neurons were firing that fast. So, there is a lot of scope to just blow straight past what the brain can do.”

Kinney at Western Digital concurs. “We theorize that some of the details of brains may be artifacts of evolution and the fact that the brain has to build itself. Whereas the systems that we engineer, they don’t have that constraint yet,” he said.

Kinney hopes that by exploring computing functions through materials we can access—silicon, metal, even brain organoids in a dish—we may coincidentally uncover what happens in the brain.

“I believe the question of power efficiency will help us unlock the brain’s secrets, so let’s go chase that question,” he said.

“How does the brain do so much with so little?”

Artwork by Cat Tervo